Alicia Raimundo was surprised to find police officers at the door of her New York City hotel room a few summers ago. When they entered, they took away her sharp objects, and told her they believed she was at risk for suicide. Though the Toronto resident had tried to take her own life years before, she wasn’t struggling that day. What prompted the two-hour visit was her Facebook status. After she had posted the lyrics to a song by the emo band Billy Talent, an acquaintance flagged her post as suicidal.

The following winter, when Raimundo, who has anxiety, depression, and a binge-eating disorder, did post suicidal thoughts to Facebook, nothing happened. A friend messaged her the following day to let her know that they had reported her post and to ask how Facebook had intervened. The company hadn’t, Raimundo replied. She recalls the gist of her since-deleted post: “I feel like I failed you guys because I’m not feeling well, and it feels like a roller coaster and I’m just not sure it’s worth it anymore.”

“I was really sad because I posted this heartfelt confession of really feeling suicidal and taking my own life, and nobody [from Facebook] talked to me,” Raimundo says. “[The friend who flagged the post was] kind of disappointed that nothing had happened too.” (Facebook requested to see Raimundo’s profile URL to confirm her story. At Raimundo’s request, The Ringer did not provide it.)

Raimundo’s surprise at the lack of response from Facebook is understandable. The social network has been a leader in online suicide prevention, first entering the space more than a decade ago. CEO Mark Zuckerberg has emphasized the company’s commitment to keeping users safe for years; a sizable chunk of his 6,000-word February manifesto is dedicated to suicide prevention and the network’s other safety features.

Raimundo, a 27-year-old mental health advocate, has had suicidal thoughts since she was a teenager. Talking to people about her struggles in real life hasn’t always been her best option; a teacher she confided in once suggested she was “crazy,” and as a kid she was too ashamed to tell her parents, thinking her problems paled in comparison with those they faced as immigrants from Portugal. That’s why sharing her feelings — positive and negative — with her Facebook friends is important to her, and why it was difficult to discover that the social network’s prevention tools sometimes failed. She has a real-life support system to lean on now, but knows not everyone is so lucky. In high school, she lost four friends to suicide.

The last few steps people take between experiencing suicidal thoughts and trying to take their lives often unfold within a matter of hours — rather than weeks or months — and thus what vulnerable users post on social media serves as a critical, real-time window into their health and safety. Social media use continues to grow in the U.S. and abroad, and an ever-growing number of people have outlets to update their networks on their mental health challenges in real time. At the same time, the giants of social media are increasingly showing a willingness to strengthen their prevention efforts — and if those efforts yield improvements, they could save more lives.

More than 40,000 people in the U.S. and close to 800,000 people worldwide die by suicide each year. In 2013, in partnership with mental health experts, platforms including Facebook, Twitter, Tumblr, and YouTube adopted the same best practices. Users on virtually every major network can report behavior they think may be suicidal and have access to a public statement about suicide and self-harm policy. In recent years, Tumblr has bolstered its efforts further to support at-risk users by streamlining the process to report suicidal content, connecting people to mental-health support resources, and running a campaign to reduce the stigma linked to mental illness. Instagram, which is owned by Facebook, launched its reporting tool in 2016 and goes beyond most other networks by also explicitly addressing content related to eating disorders.

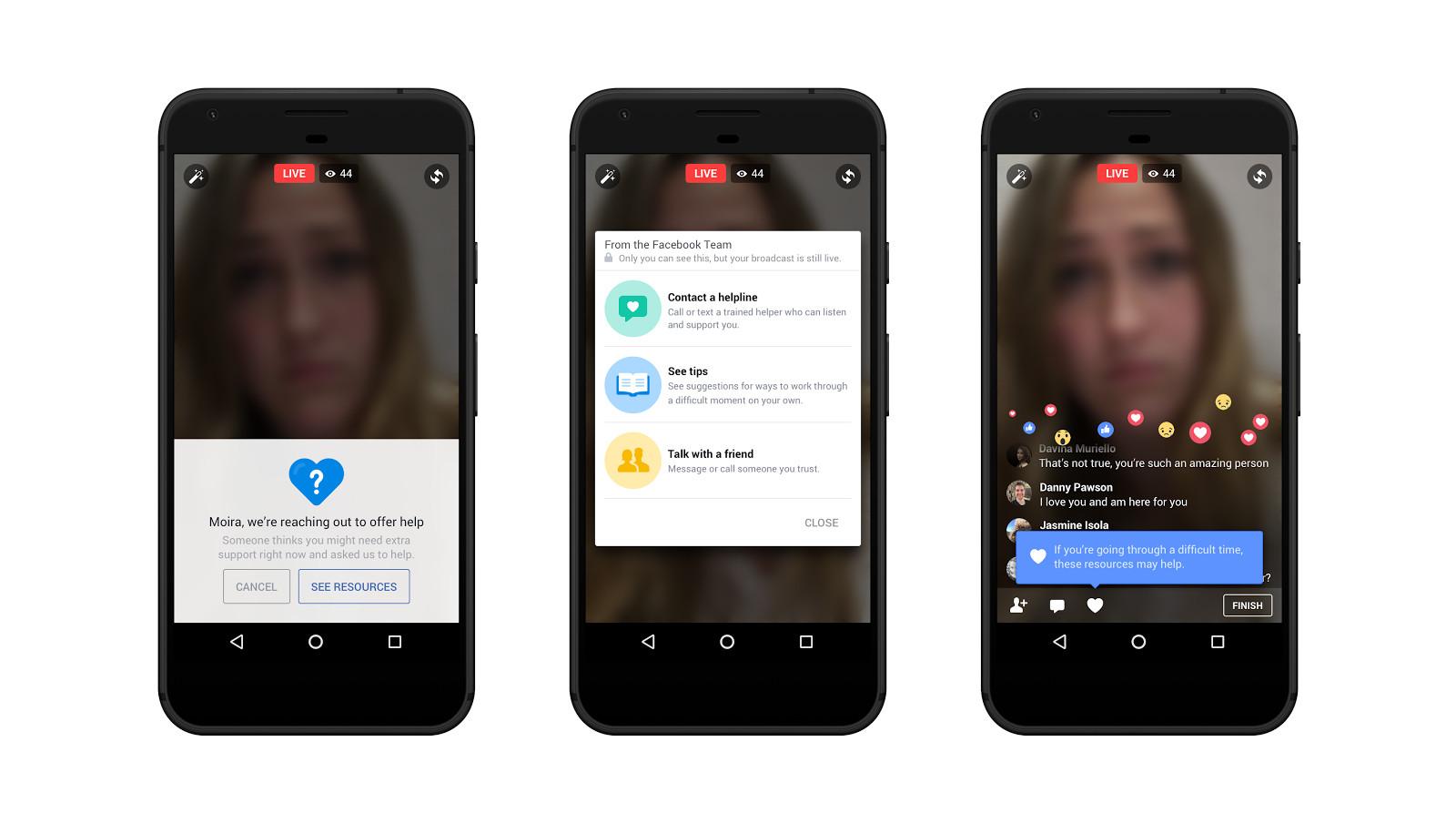

In March, Facebook announced that it would begin using artificial intelligence to more quickly detect potentially suicidal posts and Live videos. The news followed a string of suicides livestreamed on the service. The automated prevention method, which the company is currently testing in the U.S., makes reporting options more prominent on posts and Live videos that the algorithm has identified as coming from a user at a higher risk for suicide. In the most urgent cases, the system will pass along to members of Facebook’s community operations team a post by a user who may be in danger, even if none of their contacts have manually reported it. Facebook presents at-risk users with a menu of pop-up options, including connecting with the company’s suicide-prevention partners through Messenger, contacting a friend, and accessing tips to work through a difficult situation on their own.

Using machine learning to better track and address users’ mental health is a promising step for Facebook and other social networks, and it builds on the efforts that smaller, peer-support-based apps and Google have already implemented to find people in danger and offer them quick help. Navigating suicide prevention online effectively is crucial but challenging. Facebook and its peers have faced difficulties and limitations, and will continue to face them.

Researchers can glean a lot about mental health from missives as short as 140 characters. Qntfy, a company that aims to noninvasively collect and share data with researchers, clinicians, and patients to improve mental health care, compared the tweets of people who would go on to try to take their own lives with those of a control group, looking for indicators that a user may be considering suicide. People at risk, says Qntfy founder and CEO Glen Coppersmith, tend to express more emotion, include fewer emoji and emoticons, and use “I” more and “we” less than their peers. Vulnerable users also tend to tweet more between the hours of 1 and 5 a.m., suggesting they probably have a disrupted sleep schedule, which can put someone at risk for suicide.
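To make those signals concrete, here is a minimal Python sketch of how the surface features Coppersmith describes (pronoun use, emoji and emoticon counts, and late-night posting) might be tallied from a set of timestamped posts. It is an illustration only, not Qntfy’s method: the function name `post_features`, the word lists, the emoticon pattern, and the sample posts are all assumptions made for this example, and counting features like these is not the same as assessing anyone’s risk.

```python
import re
from datetime import datetime

# Hypothetical word lists chosen for this sketch; a real study would use
# a validated lexicon.
FIRST_SINGULAR = {"i", "me", "my", "mine", "myself"}
FIRST_PLURAL = {"we", "us", "our", "ours", "ourselves"}

# Rough patterns: a few common text emoticons and a broad emoji range.
EMOTICON = re.compile(r"[:;=][-']?[)(DPp]")
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")


def post_features(posts):
    """Summarize a list of (text, datetime) posts into simple counts."""
    singular = plural = emoji_count = late_night = 0
    for text, when in posts:
        # Split on letters only, so "I'm" still yields the token "i".
        words = re.findall(r"[a-z]+", text.lower())
        singular += sum(w in FIRST_SINGULAR for w in words)
        plural += sum(w in FIRST_PLURAL for w in words)
        emoji_count += len(EMOTICON.findall(text)) + len(EMOJI.findall(text))
        # The article's window: posts made between 1 and 5 a.m.
        if 1 <= when.hour < 5:
            late_night += 1
    total = len(posts) or 1  # avoid dividing by zero on an empty list
    return {
        "first_person_singular": singular,
        "first_person_plural": plural,
        "emoji_per_post": emoji_count / total,
        "late_night_share": late_night / total,
    }


# Invented sample posts, for illustration only.
sample = [
    ("I can't sleep and I feel alone", datetime(2017, 3, 1, 2, 30)),
    ("We had a great day :)", datetime(2017, 3, 1, 14, 0)),
]
features = post_features(sample)
print(features)
```

Any real system would compare numbers like these against a control group, as the Qntfy analysis did, rather than reading anything into one user’s counts in isolation.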

Although Facebook won’t divulge its algorithms (the company spoke to The Ringer on background but declined to comment on the record), Coppersmith guesses the site would look for patterns like these across demographic categories like race and gender to determine who might be contemplating suicide.

“I think this is a really smart move, and they’re one of the few companies that could actually significantly move the needle on this in that particular way because they have access to all this data,” Coppersmith says. He sees social media posts as opportunities to track users’ thoughts and actions in real time in a way that hadn’t been possible before.

Dan Reidenberg, the executive director of SAVE, a nonprofit advocating for suicide awareness and education, has long partnered with social media networks on the issue. He says one of the first tasks he helped Facebook with years ago was identifying phrases that people tend to use when expressing suicidal thoughts online (“I just want to be dead,” “I want to kill myself”), rather than isolated keywords like “suicide,” which can turn up a lot of false positives and make it harder to home in on users who need help. In addition to consulting with experts like Reidenberg, Facebook solicits feedback from people who have experienced suicidal ideation and people who have lost friends or family members to suicide.
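The difference between the two approaches is easy to see in a short Python sketch. The phrases and the keyword below are the ones quoted in this article; the function names, the normalization step, and the sample sentences are assumptions made for illustration, and nothing here reflects how Facebook’s own system is built.

```python
import re

# The isolated keyword and the full phrases mentioned in the article.
KEYWORDS = ["suicide"]
PHRASES = ["i just want to be dead", "i want to kill myself"]


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = text.lower().replace("\u2019", "'")
    text = re.sub(r"[^a-z' ]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def keyword_match(text):
    """Flag any post containing an isolated keyword."""
    words = normalize(text).split()
    return any(k in words for k in KEYWORDS)


def phrase_match(text):
    """Flag only posts containing a full first-person phrase."""
    padded = f" {normalize(text)} "
    # Padding with spaces keeps matches aligned to whole words.
    return any(f" {p} " in padded for p in PHRASES)


# Invented sample posts, for illustration only.
news_post = "Reading a news story about suicide prevention research"
personal_post = "Honestly, I just want to be dead."

print(keyword_match(news_post), phrase_match(news_post))
print(keyword_match(personal_post), phrase_match(personal_post))
```

In this toy case the keyword check flags the news post, a false positive, and misses the personal one, while the phrase check does the reverse. A fixed phrase list would still miss most real wording, which is part of why the companies are turning to machine learning.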

Using artificial intelligence to track language for potentially suicidal content isn’t a new strategy. In 2013, Durkheim Project researchers, through an opt-in program run in collaboration with the Department of Veterans Affairs, began tracking Facebook, Twitter, and LinkedIn posts from U.S. military veterans in an effort to predict what language may best signal suicidal ideation in users. Bark is an online service that uses AI to alert parents via text or email to signs of mental health concerns or potential dangers in their children’s social media posts (apps like Facebook, Snapchat, and Kik are supported), text messages, and emails. Parents can register, ask their children to integrate Bark’s tracking app on their iPhone or Android, and then monitor any sensitive content through Bark’s apps for parents or their website.

The personal connection that apps like Bark offer can be crucial to getting people the help they need and directly involving their support systems, going far beyond sending automated resources to users who may wish to sidestep them, or who may be startled by communication from a robot. Zachary Mallory, a Human Rights Campaign youth ambassador, volunteers with the Crisis Text Line, a nonprofit that offers suicide prevention support via text message. “Now, I know from experience, sometimes in the middle of a crisis-counseling shift, the funniest questions you ever get asked are, ‘Are you a robot?’” says Mallory, 20, who has borderline personality disorder and has tried to take their own life four times. (Mallory identifies as genderqueer and nonbinary and uses they/them pronouns.) “I feel like more people are going to be more responsive to an actual human than they will be to an automated response.” Both Mallory and Raimundo report other people’s Facebook statuses for suicidal content when they find it necessary — but not before reaching out to the user with a personalized message about their concern and a gentle warning that they might report the post.

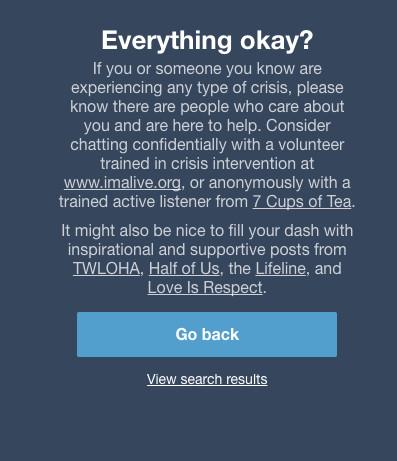

Peer-support apps like 7 Cups of Tea and Koko offer human interaction and support that occupies a middle ground between asking a real-life friend or family member for help (which some people may not feel comfortable doing) and seeking out professional treatment (which some people can’t afford). 7 Cups connects each user who struggles with mental illness or another source of stress with a peer who can offer support in a secure phone call. Similarly, Koko users post about an issue they’re dealing with, and others respond in the app, offering suggestions for how to rethink the situation in a more positive light. The users volunteering their time for strangers aren’t paid or necessarily trained professionals. “This peer support space is a very interesting one, because as far as we can tell it’s both effective and scalable in a way that neither friends nor clinicians are,” Coppersmith says. Vulnerable people may also benefit from supporting their peers through the same apps by putting the cognitive behavioral techniques they’ve learned into practice.

Increasingly, platforms have partnered with apps to offer vulnerable people a more personalized connection. Facebook’s partners include SAVE, the National Suicide Prevention Lifeline, Forefront, and Crisis Text Line. Similarly, Tumblr refers users who are reported for suicidal content or who search for hashtags like #depression, #self-harm, and #suicide to resources including 7 Cups and, since December 2016, has referred mobile users to Koko.

In 2014, transgender teen Leelah Alcorn posted her suicide note to Tumblr before taking her own life. Her death prompted a push for greater acceptance of transgender people and a call for Tumblr and other social media sites to better monitor suicidal posts. The next January, Tumblr released a standard form users can submit to flag suicidal content, among other dangerous posts, for the site’s safety team and followed that with a May 2015 campaign to counter mental health stigma.

“[Alcorn’s death] maybe reminded us about how important it is for us to be involved in this space,” says Nicole Blumenfeld, Tumblr’s director of trust and safety. “It also ended up leading very much so to initiating the Post It Forward campaign, where we wanted to be a little bit more proactive in encouraging users to talk about these issues, to build a community around these issues and kind of feel like they’re in a place where they can talk about these things.”

There’s still a long way to go in online suicide prevention. On networks like Twitter and Reddit, the strongest efforts still come not from the companies, but from users themselves. Reporting systems exist, but they can be confusing to navigate; on Twitter, for example, reporting a post for suicide or self-harm means first marking it as “abusive or harmful,” which lumps it in with issues like harassment and threats of violence toward others and risks stigmatizing it further. Also, Facebook and other livestreaming platforms have a history of leaving up suicidal footage, in one case for as long as two weeks.

Like human intervention, machine-learning progress from Facebook and elsewhere won’t be perfect, especially not at first. “[Facebook is] going to take care and think about people’s lived experiences and take input from them,” Coppersmith says. “I don’t think they’re going to get it right the first time, but we shouldn’t beat them up about that. They’re taking a pretty severe risk here.”

The algorithms will need fine-tuning. Though researchers are learning more and more about what factors hint that someone may be prone to suicidal ideation, there are bound to be surprises. He also wonders if Facebook plans to specifically target high-risk groups, like middle-aged white men.

Another challenge for Facebook and its peers will be intervening in a way that doesn’t infringe upon users’ privacy. The Samaritans charity started an app in 2014 called Radar designed to alert users when their friends posted tweets indicating they might be at risk. It backfired. A Change.org petition calling on Twitter to shut it down read: “While this could be used legitimately by a friend to offer help, it also gives stalkers and bullies [an] opportunity to increase their levels of abuse at a time when their targets are especially down.” The Samaritans suspended the app and apologized less than a month after its launch. “It’s pretty clear that the public does not think that that is a great idea to sort of give the ability to anyone to sort of find out if someone might be at risk for suicide,” Coppersmith says.

Janis Whitlock, director of the Cornell Research Program on Self-Injurious Behaviors, says machine-learning systems on Facebook and elsewhere may startle some users and discourage them from seeking help in the future. “It could be like, ‘Oh my god, I’ll never say that again. I’ll never out again because I don’t want to look this way to the world. I didn’t realize that’s what I was putting out there.’”

What users are putting out there is information that networks can keep and use for other purposes. “You have to kind of wonder as these companies collect more and more data on people, especially potential behavioral health states, will they target you with pharmaceutical companies’ advertising to see your doctors about antidepressants, or will it be more guided things in the suicide realm, like health groups and suicide prevention?” says David D. Luxton, a University of Washington School of Medicine assistant professor who specializes in studying mental health applications for artificial intelligence. “I can understand being protective of proprietary technologies, I get it. But it’s scary what they’re doing with your information and your life and your data.”

It’s difficult for outside experts to evaluate Facebook’s efforts and point out any red flags without knowing how its algorithms work — and the company is unlikely to share details of its results, or even announce if it’s testing its prevention methods. “If you don’t study the technology or the intervention that uses the technology with clinical trials and randomized trials, it’s very difficult to really assess how effective it was. So as Facebook does this, how do we really know the impact?” says Luxton, who consults for Bark and serves as chief science officer for the suicide prevention nonprofit Now Matters Now. “We may know the count of people who maybe make it through or talk to the chat service or the crisis line, or click through, but we don’t fully know how effective it was.”

One alternate way to address suicidal content online, a popular but misguided suggestion goes, is to simply stay off social media if you’re mentally ill — away from negative influences like cyberbullying, FOMO, and carefully curated online presences that lead you to believe your friends lead perfect lives. “People with mental illness are people too. They deserve to post their food selfies and talk about their lives on social media,” Raimundo says. “Anyone who says, ‘Oh, you shouldn’t talk about that on social media or you shouldn’t have a social media account’ … they’re kind of asking for someone to do something to make them more comfortable rather than actually trying to help someone who’s hurting.”

Mallory says they frequently post Facebook statuses about their experiences living with mental illness to their large following. Oftentimes, those posts get flagged, typically by people Mallory doesn’t know well, even when they’re intended to be positive and uplifting. “Just because I have a mental illness does not mean I don’t need to be on social media,” they say. “You’re preaching hate, why are you on social media?” Recently, a Facebook status of Mallory’s might have saved their own life. When they posted about feeling suicidal, their boyfriend saw it and texted them. Mallory called him at 3 a.m., and their boyfriend talked them out of trying to kill themselves.

But just because social media networks are in a position to help doesn’t mean they have an obligation to. “I have been, over the last decade, incredibly humbled by the fact that these companies have all universally taken this on and said this is important,” Reidenberg says.

Facebook’s commitment to testing new suicide prevention tools is promising, but it’s not the only way social media networks can pitch in. If competing platforms work together, as they have begun to do by attending the summits Facebook and SAVE cohost every two years, they may be able to help vulnerable people even more. Coppersmith points to the December announcement that Facebook, Microsoft, Twitter, and YouTube would team up to fight terrorist propaganda by sharing with each other hashes, or digital signatures, of images and videos they’ve removed from their platforms, to prevent the spread of recruiting messages from ISIS and other terrorist groups. “I’m optimistic and I’m hopeful that we’re going to see something like this for suicide in the next couple of years,” Coppersmith says. “We are at the precipice of revolution here,” he adds. “I really think we’re going to find out more about mental health in the next five years than we have in the last, I don’t know, 50 or 500.”

Though Facebook’s suicide reporting tool wasn’t helpful when she needed it and was a nuisance when she didn’t, Raimundo is optimistic about the role social media networks can play in suicide prevention going forward, as they develop more robust tools.

“Facebook, Tumblr, Twitter, Instagram, you know, they didn’t 100 percent think that this would happen with their platforms when they were setting up, so I’m really glad to see them step up rather than trying to make an argument that it’s not their place,” she says. “Because, you know, it’s happening. It’s going to continue to happen, so they might as well put the money and energy and manpower behind making sure that people get the right help.”