Snapchat’s Lenses is one of the app’s most beloved features, but it has endured no shortage of controversy. The app’s tool captures a live read on your face and morphs it, using filters. The puppy face filter? Adorable. Unfortunately, Snapchat has also included a blackface filter (an ode to Bob Marley), and more recently a yellowface filter (an ode to… anime?). This comes on the heels of concerns that Snapchat was taking heavy inspiration from artists to create other lenses.

But Snapchat is far from the only face-filtering app guilty of racial faux pas. One low-grade app called FaceMontages includes quite a few insensitive filters. There’s one that adds traditional Native American accoutrements, complete with a feathered headdress; there’s “Eastern Stories,” which visualizes the user as a Middle Eastern princess. Then there’s Snap Face, a sticker app that has a variety of sombreros for your selfie. The blatant Snapchat copycat Snow has a filter that turns users into a black man sporting a flattop and gold chains. And then there are the various Afro cam apps.

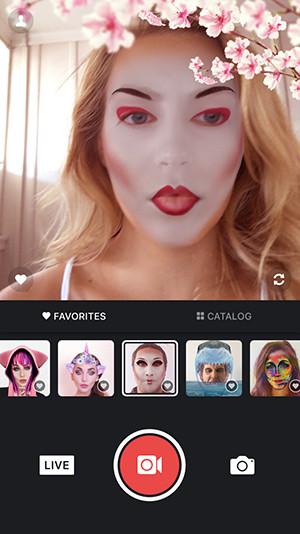

Next to Snapchat, MSQRD is the most popular live-filtering selfie app. And right now, the app — which was recently bought by Facebook — includes a geisha filter. The filter paints your face white, gives you almond-shaped eyes, and adds a cherry blossom branch to the frame. It’s a digital approximation of cultural appropriation. “When someone dresses up as a geisha without even attempting to understand what geisha do or what geisha mean within the context of the culture, it’s relegating both geisha and, if seen as ‘Japanese,’ Japan to meaningless. It’s saying that Japan is, essentially, trite,” says Elisheva Perelman, a history professor at the College of Saint Benedict & Saint John’s University who focuses on Asian history.

“Appropriation occurs when the symbol is seen as somehow separate from its meaning, as in hipsters wearing native headdress,” says Perelman. “To do something because it looks cool or funny, rather than to seek to understand its significance, is to appropriate. Snapchat filters don’t teach users about the cultures they appropriate; rather, they allow users to create a fake look for purposes of comedy and narcissism.”

The internet has a long history of cultural appropriation via face-filtering. The University of St. Andrews Perception Lab (which had been experimenting with face-morphing tools since the 1990s) started putting the Face of the Future face transformer online in the 2000s. It’s a web-based tool that allows you to see what you will look like when you’re older, or what you would look like as the opposite sex, or drunk, or as half ape. It was basically a predecessor to those wildly popular weight- and aging-booth apps. It also allows you to see what you would look like as a different race. (The site includes a disclaimer: “The Face Transformer is a fun toy only, and is not guaranteed fit for any purpose, implied or otherwise.”) News stories at the time of the tool’s release mostly described it as playful.

The smartphone led to an explosion of selfie filters. In 2013, Google made an effort to curb the problem of apps that could be perceived as making light of racial or cultural identity. The company removed a handful of apps from its store after thousands of users petitioned it to do so. Titles like “Make Me Asian,” “Make Me Russian,” and “Make Me Indian” were wiped from its catalog. The apps lacked Snapchat’s and MSQRD’s live face-reading feature, instead just taking still photos and adding elements like a Fu Manchu mustache to a selfie.

Of course, our fascination with what our faces say about who we are predates the internet. A 2005 study researched how infants respond when looking at faces of different races. Up to a certain age, the babies focused on all faces equally, but at 3 months, things started to change: White infants looked at the faces of white people longer (which the researchers attributed to familiarity and not prejudice). Another study from 2006 looked at the same phenomenon and found similar results, until the researchers ran the test with African Israeli babies, a group that tends to see more ethnicities on a daily basis. This group showed no preference. The conclusion was that “exposure to greater ethnic diversity can reduce same-ethnic preference.” (You can read an analysis of both of these studies here.)

Same-race preference becomes more ingrained as we get older. To children, faces and ethnicity are particularly interesting. Children often experiment with changing their look, such as by applying face paint and playing dress-up, and while some of this curiosity lands solidly in cultural appropriation territory (sure, that feather headdress on your toddler might seem cute, but …), it’s often more innocent than when it happens later in life, when you start to understand costumes as a joke, and know how those jokes can hurt. That’s when experimentation becomes exploitation.

Selfie apps have become our new costumes. It’s easy to snap a photo and change nearly everything about the way you look. Purple hair, dog nose, princess crown, whatever. The investment is low; the ease of use is high. But as with costumes, one foolish move can become a racist caricature. Wilfrid Laurier University’s “I Am Not a Costume” campaign highlighted how certain Halloween outfits appropriated specific cultures, and suggested that what might be innocent fun to some can be hurtful to others.

Now, apps are making it even easier to do exactly that. In an article for the Snapchat-sponsored Real Life Mag, Sharrona Pearl wrote about the performative aspect of face-swapping. “It’s experimental yet obviously reversible, with self-mockery and ironic distance built in,” she writes. “It lets us show our willingness to play with different levels of control over our self-image. We give up our face as a way of being ourselves.” In these more specific instances, there is a dark side to this rosy self-exploration. She goes on to point out that face-swapping and filtering can accomplish the same negativity that cultural costumes do. “Face swaps permit appropriation without the lived inconveniences of inhabiting them, carrying them around,” Pearl writes.

Essentially, these apps offer what users might see as a fun, lightweight way to change their race, to use another group’s ethnicity as a costume. And they can do it anytime, for free (or a couple of bucks), any night of the year. While people aren’t drunkenly parading another costumed ethnicity down the street, they are sending these images to friends, or publicly posting them for anyone to see. The images are even more present, more visible, than they would be on Halloween night.

Users are struggling with (or, in some cases, laughing at) the ethics of race-swapping.

I asked Pearl about the effects of digital “race-swapping,” and if “trying on” an ethnicity via an app was OK — so long as you didn’t post it, or perform it. “[These] questions speak to the heart of the issues around cultural appropriation … Is it racist for a white person to wear dreadlocks? Is appropriation in and of itself always problematic, given how much cultural borrowing there always is, or is the issue more one of attribution and lack thereof? Does the visualization piece make it more or less complicated?”

Pearl says much depends on intention and use: some uses err on the side of “costuming and play” and “genuine curiosity.” Others might lead people to assume part of an identity.

Most sensibly, she pointed out that the key to race-swapping filters is personal interpretation. “It’s one thing to try on a different skin color,” she says. “It’s another to then laugh at it, and still another to imagine you now know something about that experience.”